Your GPUs Aren’t Slow. They Just Have a Short Memory.

Graid Technology is on a mission to fix AI’s short memory problem, starting with KV cache.

You know the feeling: you walk into a room and forget why you came. You retrace your steps, reconstruct your train of thought, and try to remember what you were looking for. That's exactly what your AI does every time its KV cache gets evicted: it retraces its own reasoning from scratch, burning time and compute just to get back to where it already was.

__________________________________

There’s a comfortable myth in AI infrastructure: storage is an afterthought. GPUs do the work, and everything else just keeps up. That assumption held when AI meant single-shot inference. It breaks completely when AI means agentic workloads: models that run for hours, coordinate across agents, and maintain millions of tokens of active context without ever resetting state.

The mechanism that makes this possible is the KV cache: the model’s working memory, storing the keys and values from every previous token so the model doesn’t have to recompute what it already knows. When that cache overflows GPU HBM, it must go somewhere. And where it goes determines whether your AI system performs or quietly falls apart.
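To make the cost of eviction concrete, here is a minimal toy sketch in Python. It is not Graid Technology's implementation or any real inference engine: `project`, `ToyKVCache`, and `decode` are hypothetical names, and the "projection" is a stand-in for the real key and value computation. It only counts how much work is repeated when cached entries are lost.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


def project(token_id: int) -> Tuple[int, int]:
    """Hypothetical stand-in for computing one token's key and value."""
    return (token_id * 2, token_id * 3)


@dataclass
class ToyKVCache:
    entries: List[Tuple[int, int]] = field(default_factory=list)
    projections: int = 0  # number of key/value computations performed

    def append(self, token_id: int) -> None:
        self.entries.append(project(token_id))
        self.projections += 1

    def evict(self) -> None:
        # Simulates the cache being dropped with nowhere to offload it.
        self.entries.clear()


def decode(tokens: List[int], evict_at: Optional[int] = None) -> int:
    """Process tokens one step at a time and return total projections."""
    cache = ToyKVCache()
    for step, token in enumerate(tokens):
        if evict_at is not None and step == evict_at:
            cache.evict()
        # Recompute any earlier tokens whose entries are missing.
        for previous in tokens[len(cache.entries):step]:
            cache.append(previous)
        cache.append(token)
    return cache.projections


if __name__ == "__main__":
    tokens = list(range(8))
    print("no eviction:", decode(tokens))
    print("evicted at step 6:", decode(tokens, evict_at=6))
```

With eight tokens, the uninterrupted run performs 8 projections, while a single eviction at step 6 pushes the total to 14, because the six earlier tokens have to be recomputed. In a real model the repeated work grows with context length, which is why where the overflow lands matters.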

The Overflow Problem Is Worse Than You Think

The infrastructure metrics are bad enough: Time to First Token latency spikes by up to 18x, throughput drops by 10x, and GPU utilization craters to 50%, leaving your most expensive hardware burning cycles on recomputing tokens. But the model-level consequences are harder to detect and more damaging. Evicted KV cache means lost context. Lost context means hallucinations, contradictions, and reasoning that degrades mid-task without any visible error. For an autonomous agent running a multi-hour workflow, a single cache eviction event can silently corrupt the entire session.

The instinctive response is to add more GPUs. It doesn’t work; more GPUs increase KV cache demand on the same storage tier, making overflow worse. DRAM offloading preserves context but is prohibitively expensive at scale. Standard NVMe offloading is cheaper but too slow to serve KV cache at inference speed. Neither was designed for this problem. Agentic AI needs a storage tier built for KV cache.

“For the very first time, your storage system will be GPU-accelerated.” — Jensen Huang, GTC 2025.

Jensen Huang predicted it at GTC 2025.

NVIDIA architected it at GTC 2026.

Graid Technology delivers it today.

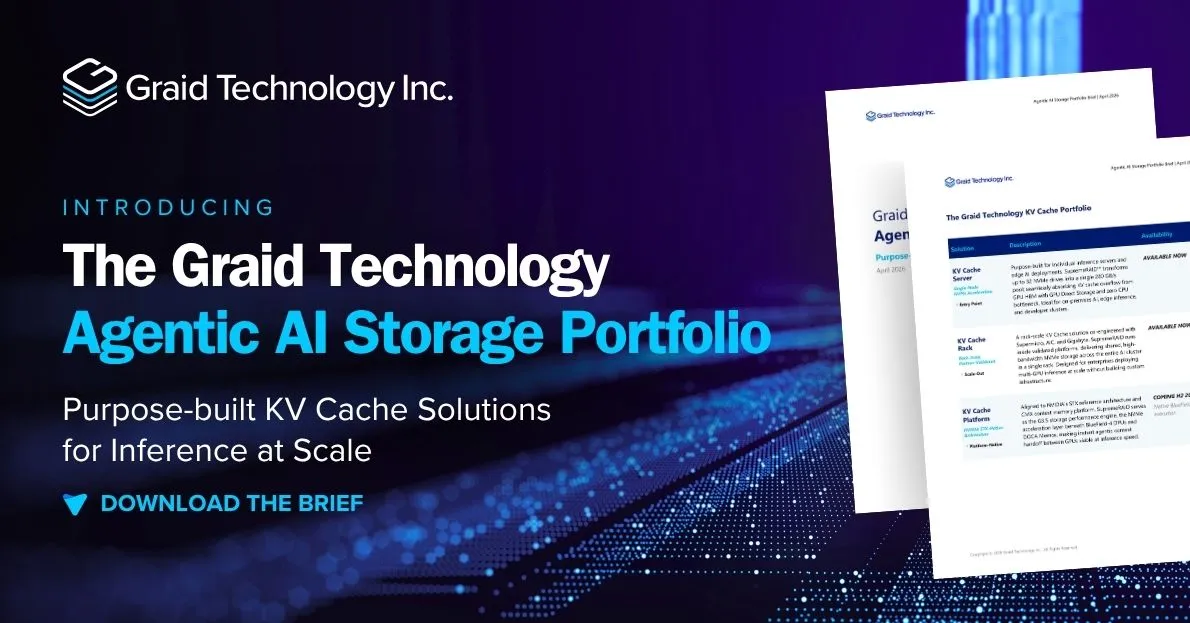

A Portfolio Built for This Moment

Graid Technology's KV Cache portfolio solves this at every deployment scale. The KV Cache Server accelerates a single inference node, aggregating up to 32 NVMe drives into a 280 GB/s pool with GPUDirect Storage and cutting KV cache read latency from 100 ms to 1.3 ms (about 77x faster), with no CPU in the data path. The KV Cache Rack scales this to the full rack, co-engineered with leading server OEM partners as validated platforms for enterprise multi-GPU deployments. The KV Cache Platform aligns natively to NVIDIA's STX reference architecture, serving as the high-performance NVMe storage layer that makes instant agentic context handoff between GPUs viable at production speed.
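The figures quoted above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the numbers stated in this post (32 drives, 280 GB/s, 100 ms, 1.3 ms) plus the standard per-token KV cache size formula. The model dimensions (80 layers, 8 KV heads, head dimension 128, 2-byte values) and the one-million-token context are assumptions chosen purely for illustration, not a description of any specific model or benchmark.

```python
def speedup(before_ms: float, after_ms: float) -> float:
    """Ratio between the two quoted read latencies."""
    return before_ms / after_ms


def per_drive_gb_s(pool_gb_s: float, drives: int) -> float:
    """Average bandwidth each drive contributes to the pool."""
    return pool_gb_s / drives


def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                       bytes_per_value: int) -> int:
    """One key and one value vector per KV head per layer."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value


def min_read_seconds(total_bytes: int, pool_gb_s: float) -> float:
    """Lower bound on read time, assuming full pool bandwidth throughout."""
    return total_bytes / (pool_gb_s * 1e9)


if __name__ == "__main__":
    print(f"speedup: {speedup(100, 1.3):.1f}x")
    print(f"per drive: {per_drive_gb_s(280, 32):.2f} GB/s")

    # Assumed, illustrative model shape (not from the source).
    per_token = kv_bytes_per_token(layers=80, kv_heads=8,
                                   head_dim=128, bytes_per_value=2)
    context_bytes = per_token * 1_000_000  # assumed 1M-token context
    print(f"KV cache: {context_bytes / 1e9:.2f} GB")
    print(f"best-case read: {min_read_seconds(context_bytes, 280):.2f} s")
```

Under these assumptions, each token needs 327,680 bytes of KV cache, so a one-million-token context comes to roughly 328 GB, more than a single GPU's HBM holds. Reading it back at the full 280 GB/s would take about 1.17 seconds at best; real transfers depend on access patterns and will differ.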

Two additions are on the roadmap. Native BlueField-4 execution will expand SupremeRAID's deployment model from GPU-adjacent to DPU-native, giving infrastructure teams a fully integrated STX storage node without a discrete accelerator. Expanded drive count support will deliver rack-scale throughput from a single SupremeRAID instance spanning multiple STX nodes.

Better Performance. Lower TCO. Same Hardware.

The teams that solve the KV cache bottleneck first will run more agents, serve more users, and do it without overprovisioning GPUs or expanding expensive DRAM. NVMe-based acceleration at 280 GB/s delivers HBM-class performance at storage-tier economics. Better performance and lower TCO are not a tradeoff; they’re the same outcome with the right architecture.

Want the full picture?

Download the Graid Technology Agentic AI Storage Portfolio Brief for the complete technical narrative, deployment architecture, NVIDIA STX compatibility FAQ, and roadmap details.

→ Read the Solution Brief

→ Read the Press Release

→ Read about Graid Technology's approach to AI
