Your GPUs Aren't Slow. They Just Have a Short Memory.

Your AI doesn't have a compute problem. It has a memory problem. Agentic workflows, multi-step reasoning, and inference at scale all depend on KV cache, but the storage tier beneath your GPUs was never built for it. Token volume is growing exponentially: LLM APIs can already drive roughly 50 trillion tokens a day, and agentic AI demands a million times more. Yesterday's infrastructure won't get you there. Graid Technology will.

When KV Cache Overflows, Everything Breaks

Ignore it, and the performance impact is staggering. Time to First Token spikes 18x. Throughput drops 10x. GPU utilization craters to 50%, with your most expensive hardware burning cycles on recomputation. The hidden damage is worse. Evicted context means hallucinations, contradictions, and silent reasoning failures. For an agent running a multi-hour workflow, one eviction corrupts the entire session. No error. No warning. No recovery.

Where KV Cache Overflow Breaks Production AI

The KV cache bottleneck is not a fringe edge case. It is a structural failure point in every agentic AI deployment running long context, multi-step reasoning, or concurrent inference at scale. These are the four scenarios where it surfaces most visibly.

Agentic Coding & Dev Automation

Autonomous coding agents maintain active context across multi-hour sessions — reading codebases, running tests, and iterating without resetting state. A single HBM overflow event mid-session corrupts the entire task. SupremeRAID™ provides persistent, protected KV cache storage that keeps long-running agents on task from first token to final output.

Enterprise Document Reasoning

Document processing agents that reason across thousands of pages in a single unbroken thread generate KV cache volumes that GPU HBM cannot hold alone. SupremeRAID™ absorbs the overflow at NVMe speed, preserving full document context without eviction, hallucination risk, or reasoning degradation.

High-Concurrency Inference

At just three simultaneous users, Llama 3-70B on an H100 80GB requires 120 GB of KV cache, which overflows HBM entirely. SupremeRAID™ scales to handle production concurrency without latency penalties, keeping Time to First Token predictable under real-world load.
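
The 120 GB figure can be sanity-checked with a short sizing sketch. The layer count, KV head count, and head dimension below come from the public Llama 3 70B model configuration. The 16-bit cache precision and the 128K-token context per user are assumptions, since the text above states neither (128K is the window of the later Llama 3.1 release). Under those assumptions the arithmetic lands on 120 GiB for three sessions.

```python
# Rough KV cache sizing for Llama 3 70B. A sketch under stated assumptions,
# not a vendor sizing tool.

NUM_LAYERS = 80        # transformer layers (public model config)
NUM_KV_HEADS = 8       # grouped-query attention KV heads (public model config)
HEAD_DIM = 128         # dimension per attention head (public model config)
BYTES_PER_VALUE = 2    # ASSUMPTION: FP16/BF16 cache, no quantization


def kv_bytes_per_token() -> int:
    """Bytes of KV cache one token occupies across all layers."""
    # The factor of 2 covers one key and one value vector per layer per KV head.
    return 2 * NUM_LAYERS * NUM_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE


def kv_cache_gib(context_tokens: int, concurrent_sessions: int) -> float:
    """Total KV cache in GiB for a number of sessions at full context."""
    total_bytes = kv_bytes_per_token() * context_tokens * concurrent_sessions
    return total_bytes / 2**30


if __name__ == "__main__":
    # ASSUMPTION: each user holds a full 128K-token context.
    context_tokens = 128 * 1024
    print(f"per token: {kv_bytes_per_token() // 1024} KiB")
    print(f"3 sessions: {kv_cache_gib(context_tokens, 3):.0f} GiB")
```

With shorter contexts or a quantized cache the total shrinks proportionally, so the point at which HBM overflows depends heavily on the workload.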

Multi-Agent Coordination

Enterprise automation and scientific research platforms run networks of specialized agents, each holding its own context while drawing on a shared memory pool. SupremeRAID™ delivers the bandwidth and fault tolerance required to sustain coordinated multi-agent workloads at scale.

The Wrong Instincts: More GPUs Won't Save You

Adding GPUs doesn't fix the problem. It makes it worse: each GPU drives more KV cache into a tier that's already overflowing. DRAM offloading works, but costs more than the GPUs it protects. Legacy NVMe is cheaper, but too slow to keep pace with inference. Neither was built for this workload. The fix is NVMe architected for KV cache: fast enough to feed the GPU, resilient enough to protect the session.

Storage Built for Agentic AI. At Every Scale.

SupremeRAID™ aggregates up to 32 NVMe drives into a single 280 GB/s pool, bypasses the CPU via GPU Direct Storage, and cuts KV cache read latency from 100ms to 1.3ms, a 77x improvement. Explore how our KV Cache portfolio delivers this to every deployment, at scale.

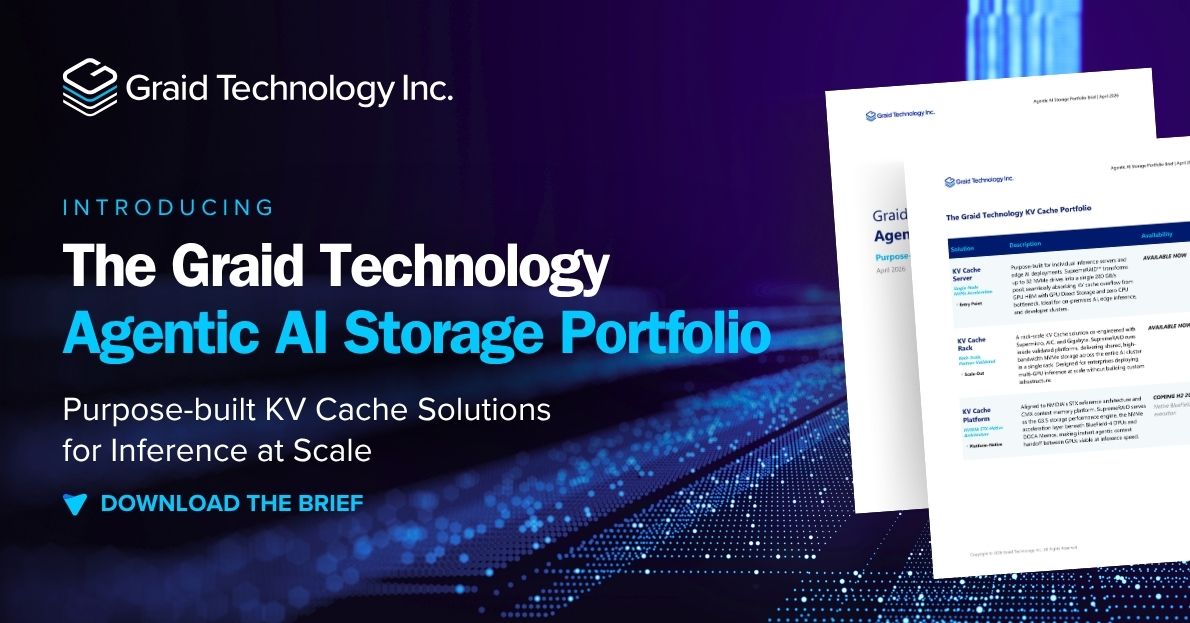

KV Cache Server

Single-Node NVMe Acceleration

Purpose-built for individual inference servers and edge AI deployments. SupremeRAID™ transforms up to 32 NVMe drives into a single 280 GB/s pool, absorbing KV cache overflow from GPU HBM via GPU Direct Storage with zero CPU bottleneck. Ideal for on-premises AI, edge inference, and developer clusters. Available now.

KV Cache Rack

Rack-Scale, Partner-Validated

Co-engineered with leading server OEM partners. SupremeRAID™ runs inside validated platforms, delivering shared high-bandwidth NVMe storage across an entire AI cluster in a single rack. Designed for enterprises scaling multi-GPU inference without building custom infrastructure. Available now.

KV Cache Platform

NVIDIA STX-Native Architecture

Aligned to NVIDIA's STX reference architecture and CMX context memory platform. SupremeRAID™ serves as the G3.5 storage performance engine beneath BlueField-4 DPUs and DOCA Memos, enabling instant agentic context handoff between GPUs at inference speed. Native BlueField-4 execution is available in H2 2026, with expanded drive count in Q4 2026.

KV Cache Acceleration at Storage Economics

NVMe-based KV cache offloading delivers HBM-class read performance at a fraction of the cost — no DRAM expansion, no GPU overprovisioning, no rebuild tax after drive failures. The Graid Technology KV Cache portfolio replaces a series of infrastructure compromises with a single purpose-built solution.

Eliminate GPU Overprovisioning

Adding GPUs to compensate for a storage bottleneck makes the problem worse — each additional GPU increases KV cache demand on the same storage tier. SupremeRAID™ removes the constraint, so GPU capacity is sized for inference workload, not to offset I/O limitations.

77x Faster KV Cache Reads

SupremeRAID™ delivers KV cache reads at 1.3ms versus 100ms or more with standard NVMe. 280 GB/s of aggregate bandwidth across 32 drives matches the overflow rates that production inference clusters generate — with no CPU in the data path.
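
The headline numbers above follow from simple arithmetic, sketched here for readers who want to check them. The latency and bandwidth values are the ones quoted in this page. The even split of bandwidth across drives and the purely bandwidth-bound transfer time are simplifying assumptions, and the 120 GB payload is the cache size quoted in the High-Concurrency Inference scenario.

```python
# Back-of-envelope check of the quoted latency and bandwidth figures.
# Illustrative only: real throughput depends on drive model, queue depth,
# and access pattern.

DRIVES = 32                     # drives in the pool (quoted above)
POOL_GB_PER_S = 280.0           # aggregate bandwidth (quoted above)
BASELINE_LATENCY_MS = 100.0     # standard NVMe read latency (quoted above)
ACCELERATED_LATENCY_MS = 1.3    # accelerated read latency (quoted above)


def speedup(before_ms: float, after_ms: float) -> float:
    """Ratio of baseline latency to accelerated latency."""
    return before_ms / after_ms


def per_drive_gb_per_s(pool_gb_per_s: float, drives: int) -> float:
    """ASSUMPTION: bandwidth is spread evenly across all drives."""
    return pool_gb_per_s / drives


def transfer_seconds(size_gb: float, bandwidth_gb_per_s: float) -> float:
    """ASSUMPTION: transfer is purely bandwidth-bound, with no overhead."""
    return size_gb / bandwidth_gb_per_s


if __name__ == "__main__":
    ratio = speedup(BASELINE_LATENCY_MS, ACCELERATED_LATENCY_MS)
    print(f"latency improvement: {ratio:.1f}x")
    print(f"per-drive share: {per_drive_gb_per_s(POOL_GB_PER_S, DRIVES)} GB/s")
    print(f"120 GB at pool speed: {transfer_seconds(120.0, POOL_GB_PER_S):.2f} s")
```

The ratio works out to about 76.9, which rounds to the 77x quoted, and each drive would need to sustain 8.75 GB/s for the pool to reach 280 GB/s.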

Keep GPUs Above 90% Utilization

When KV cache spills to unaccelerated storage, GPU utilization falls to 50% or below. SupremeRAID™ feeds the GPU directly via GPU Direct Storage, eliminating the idle cycles that inflate infrastructure cost and degrade user-facing latency.

A Clear Path from Server to Rack to Platform

Start with a single KV Cache Server for an individual inference node. Scale to a KV Cache Rack for shared cluster deployment. Align to NVIDIA's STX architecture with the KV Cache Platform — all on a common SupremeRAID™ technology core, with no re-architecture required.

The Teams That Solve It First Win

Agentic AI isn't a future event. It's reshaping production infrastructure today. Teams that solve the storage layer first will deploy more agents and serve more users on the hardware they already own — and spend far less doing it. Better performance and lower TCO aren't a tradeoff. With Graid Technology, they're the same outcome.